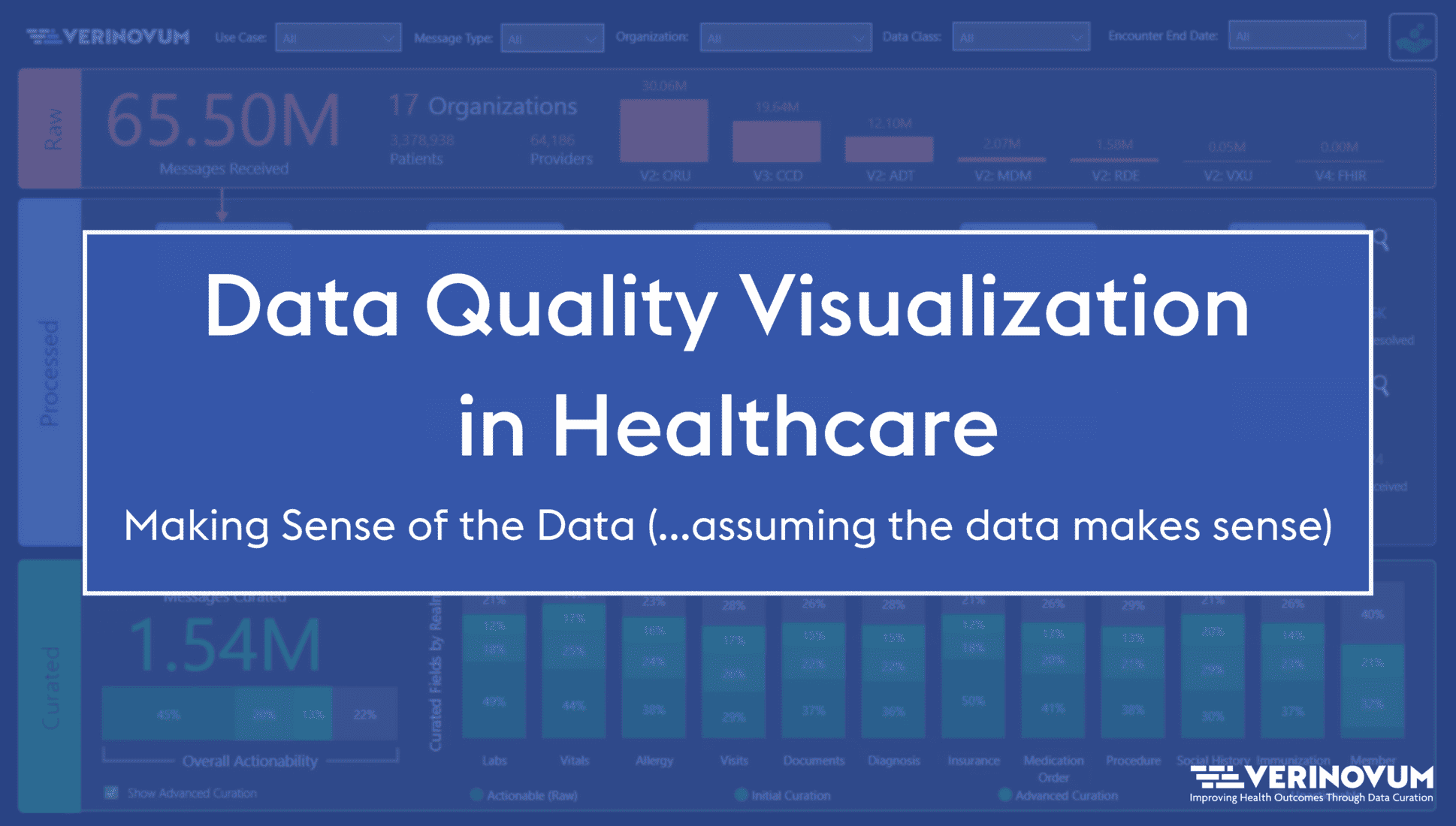

Data Quality Visualization in Healthcare: Making Sense of the Data (…Assuming the Data Make Sense)

Learn how providers and payers use data visualizations to make the most of quantitative analytics, evaluate care programs, and improve patient outcomes.